This is the third of a four-part series on the history of vaccines.

At this point in history, advances in vaccination were making disease prevention more effective. Immunizing the public was becoming socially accepted, to a degree, reaching more people and saving more lives each year.

In the late 1800s, several hundred people willingly accepted a new vaccination for diphtheria. It was partially successful, but doctors had no reliable method for measuring the dose. Some recipients may have received too little, leaving them vulnerable to infection. Governments later began regulating vaccines to establish a standard measure and dose.

Only a decade later, the British military began using typhoid vaccines. This era was also an important lesson for governments, as contaminated vaccines started to appear. There were few regulations on how vaccines were produced or administered, and the risk of additional infections was high. One of the best-known incidents occurred in St. Louis, where over a dozen children died after receiving a diphtheria vaccine. The deaths were attributed to a batch sourced from an animal that had been infected with tetanus.

This tragedy led the federal government to impose strict regulations on vaccines through what became known as the Biologics Control Act of 1902. Its stated goal was to “regulate the sale of viruses, serums, toxins, and analogous products.”

Years later, as immunizations became more common, two physicians described a condition they called serum sickness, which we now recognize as a type of allergic (hypersensitivity) reaction. In some people, they found that the vaccination caused irritation, swelling, fever, or other adverse reactions.

In 1914, typhoid vaccination became standard practice in the United States. In 1922, the Department of Public Health began requiring vaccinations for schoolchildren: every public school student had to present proof of vaccination. This requirement gave the anti-vaccination movement new momentum, as compulsory school vaccination motivated many parents to oppose the regulation.

Thanks to the vaccine’s success, typhoid was almost nonexistent among American soldiers during World War II. Diphtheria, however, continued to spread, causing nearly 1 million cases in Europe.

Despite the success of vaccination, especially in the military, it was, ironically, an Army lieutenant who led a revolt against the then-risky practice. He led an armed charge against a group of doctors who attempted to vaccinate the people of Georgetown, Delaware.

The growing opposition may have been a response to the poor outcomes of certain vaccines. As the saying goes, the squeaky wheel gets the grease, and in this case, the bad vaccines got most of the attention. Mistakes in sourcing, storing, and reusing vaccines would lead to hundreds of child deaths around the world over the next several decades.

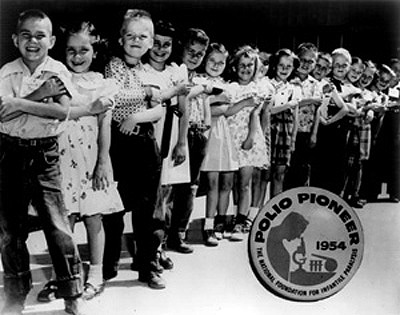

Unfortunately, it didn’t end there. In the 1950s, when the United States began a massive polio vaccine trial, the results were both encouraging and disturbing. The polio vaccine proved 80 to 90% effective, but a large number of the children who received it were later paralyzed, mostly in the arm where they had been inoculated. This problem took several years to isolate and solve.

As developed countries increasingly adopted the standard vaccines, infection rates continued to drop until some diseases nearly disappeared entirely. In the 1970s, the World Health Organization (WHO) began a campaign to help poorer countries immunize their children against several diseases, including diphtheria, polio, tuberculosis, measles, and tetanus. In 1980, the WHO declared the world free of smallpox, and in 1994, the Pan American Health Organization announced that polio had been eliminated from the Americas.

Tune in for the final post in the History of Vaccines series, where we’ll discuss herd immunity, the cholera vaccine, and the future of vaccines.